Danny Duncan Collum, author of the novel White Boy, teaches writing at Kentucky State University in Frankfort.

Posts By This Author

I Started Writing This Column 35 Years Ago. Where Are We Now?

Two big things have happened that exploded many of my expectations and drastically altered the cultural landscape.

I WROTE MY first “Eyes and Ears” column in January 1987 when I was a Sojourners staff editor. Over the ensuing years, I’ve changed from Protestant to Catholic, from full-time journalist to full-time teacher, and from city mouse to country mouse. I’ve been married to Polly Duncan Collum and helped raise four children. Through all that, I’ve kept this column going, but now I’m pulling the plug to make way for whatever’s next.

In my first column, I set out a twofold purpose for this space. I intended to track the merger of politics and popular culture that began in earnest with the 1980 election of a movie star president. I noted then that our public life was largely being reduced to an “ephemeral community of shared media experience,” by which, at the time, I meant mostly Hollywood movies and various televised spectacles.

By the time we elected a reality TV star as president, the convergence of politics and popular culture was already long complete, except that, in a world of microtargeted messaging, there is no longer even much “shared media experience” from which to forge a community.

My second rationale for starting the column, however, has held up a little better. I noted way back then that, in both politics and popular culture and in the intellectual netherworld of think tanks and commentary journalism, the very definitions of terms such as “America,” “democracy,” and “Christianity” were up for grabs. In 1987, I called this a “war of ideas” and it continues with a vengeance, though often degenerating into an emotional war of identities.

However, “stuff happens.” And in these 35 years, two big things have happened that exploded many of my expectations and drastically altered the cultural landscape.

Corporate Slacktivism and Performative Marketing

How Coca-Cola kicked off 40 years of “corporate wokeness.”

THE LATEST FAD among some conservative pundits and propagandists is to bash corporate executives who use their positions to promote “politically correct” causes. They call it “corporate wokeness,” and they see it everywhere. However, this is not a new phenomenon.

In 1971, in the backwash of the 1960s, America was very much a country in crisis. Large swaths of our inner cities still bore scars left by the uprisings that followed the assassination of Rev. Martin Luther King Jr. A president elected on a promise to end the Vietnam War was widening it instead. Coca-Cola had the answer to all that trouble and strife. That year, the soda company assembled 500 young people of varied races and nationalities on a hilltop and filmed them singing, “I’d like to teach the world to sing in perfect harmony.” So “corporate wokeness” was born.

Twenty-nine years later, Coca-Cola paid millions of dollars to settle a federal court case accusing it of a historical pattern of systematically underpaying and otherwise discriminating against its Black employees.

In spring 2020, just a few days after a police officer murdered George Floyd, JPMorgan Chase CEO Jamie Dimon and Brian Lamb, the company’s global head of diversity and inclusion, issued a statement that “we are watching, listening and want every single one of you to know we are committed to fighting against racism and discrimination wherever and however it exists.” A week later, Dimon was photographed, with some bank employees, down on one knee in the Colin Kaepernick pose, presumably in an attempted display of solidarity.

‘The Cartel’ Behind a Plague of Addiction

"Dopesick" and Purdue Pharma's deadly lies.

THERE'S A MOMENT in the Hulu miniseries Dopesick in which a Drug Enforcement Administration officer walks into her supervisor’s office to talk about the wave of opioid addiction that was, in the early 2000s, already rampant in central Appalachia. Earlier she’d been told that the higher-ups weren’t interested in “pill mill” doctors and pharmacy burglaries. They wanted to go after the cartel. Well, says Agent Bridget Meyer (played by Rosario Dawson), she’s found the cartel—and proceeds to recite the Stamford, Conn., address of Purdue Pharma Inc.

Over the past few years, documents uncovered in various lawsuits have made it clear that Purdue Pharma, privately owned by members of the Sackler family, was “the cartel” behind a plague of addiction and overdose that has so far killed more than a half-million Americans. And the kingpin of this cartel was Purdue’s Richard Sackler, former company president and co-chair of the board of directors.

In 1996, Sackler conceived an ambition to cure the world of chronic pain—and multiply the family fortune—with the “miracle drug” OxyContin, a powerful time-released painkiller. Sackler hired an army of attractive young sales reps and aimed them at small-town doctors in parts of the country with lots of painful workplace injuries from things like logging and coal mining. Misery, dependency, and death followed as the drug spread unchecked like wildfire for an entire decade.

The Danger of Deepfakes

Digital technology consistently outruns our capacity to manage it.

DEEPFAKES—DIGITAL CREATIONS in which people appear to be saying and doing things they never did or said—have been around for a while now, mostly as jokey, obviously satirical clips on the internet. In the past decade, the technology has been widely used in entertainment. Carrie Fisher was faked into Star Wars: The Rise of Skywalker. A hologram of Tupac Shakur headlined the 2012 Coachella festival, one of Whitney Houston is about to play Vegas, etc. But this year, in Roadrunner, a documentary about the late celebrity chef Anthony Bourdain, a line was crossed.

Billy Graham: Tragic Hero?

Confusing the “kingdom of God with the American way of life.”

THE TWO-HOUR PBS documentary Billy Graham ran as part of the “American Experience” series, but it could have been subtitled “An American Tragedy.”

The story Billy Graham tells is mostly one of triumph. A boy grows up on a North Carolina dairy farm, becomes the top Fuller Brush salesman in a two-state territory, then answers a call to preach. His crusades attract more than 200 million people and change hundreds of thousands of lives. However, like all the tragic heroes before him, Billy Graham had a flaw. It was neither lust nor greed, the nemeses of so many evangelists. Instead, as one of the commentators in the documentary tells us, Graham was drawn to power “like a moth to a flame.”

In the 1940s Graham led a movement that dragged evangelical Christianity out of the cultural backwoods and into the mainstream of postwar American life. Graham’s early years provide a road map of that movement as he went from ultra-sectarian Bob Jones University to Florida Bible Institute to Wheaton College. He worked as a staff preacher with Youth for Christ, then began a series of independent evangelistic crusades that started in a tent off Hollywood Boulevard in 1949 and culminated, in 1957, with a 16-week run at Madison Square Garden.

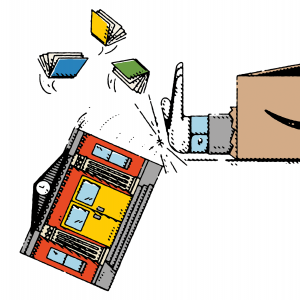

Why Is Jeff Bezos Targeting Public Libraries?

Amazon's challenge to big and dangerous thoughts.

AMAZON FOUNDER JEFF BEZOS has never been seen in a top hat like the guy on the Monopoly board game, but, in every other way, he is a classic monopolist—the very model of a 21st-century robber baron.

There’s at least one difference, however, between Bezos and robber barons of the past. While steel baron Andrew Carnegie became famous for building nearly 1,700 public libraries in small towns across the United States, Bezos has turned his wealth and power to strangling them.

One mark of the monopolist has always been predatory pricing—selling an item at a loss to force a competitor out of business. As the first company to perfect an online ordering and delivery system, Amazon used that advantage to destroy its independent, brick-and-mortar retail competition. As rival online merchants emerged, Amazon systematically underpriced them until they shuttered or fled to the “shelter” of the Amazon Marketplace.

Another classic monopolizing strategy is vertical integration—controlling the supply chain from production to point of sale. When streaming video became the next big thing, Amazon didn’t simply start a streaming rental service; it went into the movie production business.

If a Coalition Can Happen Here, It Can Happen Anywhere

An unlikely partnership in Kentucky.

MY BELOVED ADOPTED state of Kentucky doesn’t rank number one in many things. In most measures of health, wealth, or education, we rank somewhere in the mid-40s. However, Louisville’s Courier-Journal recently unearthed a statistic in which Kentucky totally wrecked the curve—number of people per capita arrested for their actions during the Jan. 6 attack at the U.S. Capitol.

As of this writing, Kentucky’s number of apprehended insurrectionists equals that of neighboring Ohio, a state with almost three times our population.

I doubt that surprises many readers, but there’s another side to the Kentucky story that might: There are important voices in the state denouncing the riot—members of the African American community, which is mostly concentrated in our cities, but also members of our other left-out and left-behind community, the mostly white population of Appalachian eastern Kentucky. And there are even signs that some people among those two groups are reaching out to each other.

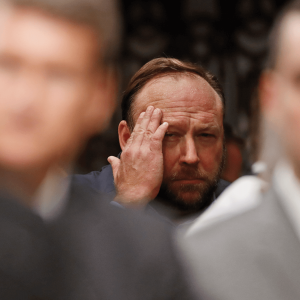

Who Gets to Be a Social Media Gatekeeper?

For years, social media platforms have profited from a business model that ignores truth and promotes outrage.

LIKE THE FRENCH police officer in Casablanca who was “shocked, shocked” to find gambling in Rick’s Cafe, in the wake of the Jan. 6 riot at the U.S. Capitol, social media companies were “shocked” to discover violent anti-Semitic and white nationalist agitators lurking in plain sight on their platforms. With their usual earnest hypocrisy, the companies took action, banning tens of thousands of groups and individuals from the social media universe. Facebook and YouTube suspended Donald Trump’s accounts. Twitter permanently banned him.

Never mind that in the preceding days and weeks those same social media platforms hosted the planning for Jan. 6, or that for years they have profited from a business model that ignores truth and promotes outrage. But when some of their more unruly customers got off the leash and started threatening the people who write antitrust laws, Facebook, Google, and Twitter suddenly became tribunes of civility.

Of course, such monumental hypocrisy from Big Tech gave many Republican politicians the opportunity to change the subject from their own possible complicity in the insurrection to what they claim is suppression of free speech by the liberals in Silicon Valley. To this, clever liberals have replied that the First Amendment only applies to government, not to private corporations.

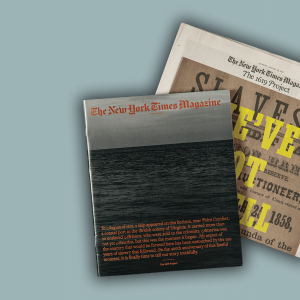

The Birth of America's Class Divisions

Bacon's Rebellion in 1676 reminds us that the concept of race in America began as a way to ensure domination by the wealthy.

IN AUGUST 2019, The New York Times published a special edition of its magazine, with an accompanying podcast, to note the 400th anniversary of the arrival of the first Africans in the Virginia colony. They called the total work “The 1619 Project.” As a Times blurb for the project put it, “American slavery began 400 years ago this month. This is referred to as the country’s original sin, but it is more than that: It is the country’s true origin.”

Almost a year later, “The 1619 Project” became a school history curriculum, and in the waning days of his presidential administration Donald Trump pushed back with plans for a “1776 Commission” to promote “patriotic education” and counter the claim that “America is a wicked and racist nation.”

It’s not surprising that a nation in which everyone has a right to their own facts may end up with two foundings. However, while those who emphasize the centrality of African enslavement in the American story are certainly closer to the truth, the champions of both 1619 and 1776 are missing something crucial. For all the things it got right, “The 1619 Project” oversimplified the origins of the U.S. slave system. As the eminent African American historian Nell Irvin Painter wrote in The Guardian, “People were not enslaved in Virginia in 1619, they were indentured. The [first] Africans were sold and bought as ‘servants’ for a term of years, and they joined a population consisting largely of European indentured servants, mainly poor people from the British Isles.”

Selling Personal Data Should Be Banned

‘The Social Dilemma is here to tell us one big thing: It's worse than we thought.’

THE PAST DECADE has seen an endless trickle of negative stories about social media—data breaches, Russian bots, cyberbullying, digital radicalization, etc.—so by now almost everyone knows that the amusement and convenience those platforms offer come with a downside. But now a new Netflix documentary, The Social Dilemma, is here to tell us one big thing: It’s worse than we thought. In fact, it’s worse than we could have possibly imagined.

In the film this alarm is raised by many of the very people who helped create the systems they now decry. We’re talking about the guy who invented the “Like” button for Facebook, the guy who designed the recommendation engine for YouTube, the fellow who invented the infinite scroll. One after another these mostly white, mostly male characters come on camera to tell us how badly their proudest accomplishments have gone awry.

The big problem these folks warn us about is that our smartphones constantly collect data (what we buy, what music we play, where we are, who we talk to, etc., ad nauseam) and that data is used to fuel a system of targeted alerts, notifications, and recommendations designed to keep us on a site for as long as possible and deliver us to advertisers who also have that data about us.

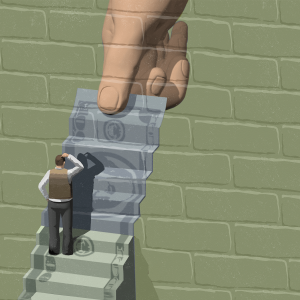

Poorer Than Our Parents?

The wealthy own everything, so when’s the revolution?

OK, I ADMIT IT. I haven’t read Thomas Piketty’s 700-page Capital in the Twenty-First Century, the most-talked-about economics book of recent decades. There are too many novels in the world, and economics is hard. But not to worry: Even for numerophobes like me, documentary filmmaker Justin Pemberton has come to the rescue with a quick and clever 103-minute movie of the same name that lots of people who claim to have read Piketty’s book say is, if not a sufficient replacement, at least an effective companion.

Despite the title, the bulk of the film covers the 18th, 19th, and 20th centuries: A rotating cast of talking heads (including Piketty’s own) narrate the story of wealth in Europe and North America—from the palace of Versailles (“Royals” by Lorde on the soundtrack) to the slave markets of New Orleans and the happy suburbs of mid-20th-century America. All this is illustrated by a montage of clips from movies, including Les Misérables (the old black-and-white version and the musical), Pride and Prejudice, The Grapes of Wrath, and many more, along with Depression-era newsreel footage of striking workers battling police and seizing factories.

Working From Home With No High-Speed Internet

Four presidential administrations have acknowledged the necessity of universal internet access, but none of them have made it happen.

FOR OVER A month now, like everyone else, I have been isolated at home. Here with me are my wife, Polly, and our two youngest sons, both college students. All of us are continuing our work and studies online, and our rural home has become a sort of cyber-monastery. We meet in the morning for daily Mass on YouTube, then peel off to our separate hotspots to toil through the day. We don’t have compline, but we do often reconvene to watch The West Wing.

This isn’t the life any of us would have chosen, but it’s probably good for the soul to surrender some of our precious, almighty power of choice, and it helps that almost everyone is going through this with us. But there’s one aspect of our locked-down life that we can’t attribute to the vagaries of a random virus, and it isn’t shared with most of our fellow citizens. Instead, it’s one of the many rank inequalities the COVID-19 crisis has exposed in American life.

Unlike most of you, we don’t have access to high-speed broadband internet. Two months ago, that might have seemed like a trivial complaint, but not now. And we’re far from alone. The best estimate says that there are about 42 million of us, about 13 percent of the U.S. population, mostly in rural America. Then there are all the people who could have access to broadband, but don’t, mostly because they can’t afford it. All told, about 30 percent of us are out here in the digital cold.

The Catholic Church According to Netflix

There are more than two sides to “The Two Popes.”

ACCORDING TO ARISTOTLE'S Poetics, art is supposed to imitate life. However, Oscar Wilde claimed that life more often imitates art. In the case of the recent Netflix movie The Two Popes and warring camps within the Catholic Church, it may be hard to tell which is which.

The Two Popes—which depicts an imagined relationship between Pope Emeritus Benedict XVI and his successor, Pope Francis—was bound to inflame tensions between those who believe that Francis wants to toss out historic church teachings on marriage and sexuality and those who suspect that anyone with a soft spot for the Latin Mass wants to bring back the Inquisition. Then, within weeks of the movie’s release, we had the spectacle of Benedict appearing as co-author on a book about priestly celibacy that seemed like a timed rebuke to the limited openness to ordaining married men expressed at the Amazon Synod that was called by Francis. Benedict later asked that his name be removed from the book.

Time to Delete Your Church’s Facebook Page?

When we lend our eyeballs to that platform, we help to fund its corrupt and dangerous practices.

BY NOW THE sins of Facebook, as a social media platform and megacorporation, are well-known. You’ve got invasions of privacy, data breaches, viral falsehoods, livestreamed rapes and murders, and the list goes on.

Well, a few months ago, the volunteer technology committee at the Catholic parish where my wife, Polly, works as social responsibility minister did something about it. They asked their parish council to consider taking the congregation off Facebook entirely and no longer using the platform as a medium of communication. When Polly told me about this, I was a little surprised. Maybe I missed something, but, amid the sporadic calls to “Delete Facebook” in the wake of the company’s various scandals, I hadn’t heard of a religious community actually implementing a boycott.

Once you think about it, the arguments for boycotting Facebook are pretty obvious. When we lend our eyeballs to that platform, we bring it advertising dollars, helping to fund its corrupt and dangerous practices. And what’s worse, the company’s business model makes every person or organization with a Facebook page a recruiter for the company and turns every posted detail of our lives into a product (consumer data) that the company can sell to commercial and political advertisers. When a congregation encourages parishioners to log onto a church Facebook page and share what they find there with interested friends, the church places its members and friends at risk of having personal information exposed to bad actors.

What’s the Matter with Our White Working Class?

To understand the white working class, look beyond "Hillbilly Elegy."

DONALD TRUMP'S VICTORY came mostly from non-college-educated whites in the Appalachian parts of Pennsylvania and Ohio and the deindustrialized Rust Belt regions of Michigan and Wisconsin, including many areas that had voted twice for Barack Obama. As this realization dawned, many affluent, educated, bicoastal liberals began to ask: What’s the matter with our white working class? J.D. Vance, who wrote Hillbilly Elegy: A Memoir of a Family and a Culture in Crisis (2016) and grew up in the Rust Belt, in a family still moored to Appalachian Kentucky, turned out to be just the guy to tell the neoliberal elite what it wanted to hear.

Sure, America’s industrial economy went to hell in the past four decades, he acknowledged. But Vance said his people haven’t pulled out of that slump because of what he called “hillbilly culture”—which, in his telling, seems to consist mostly of drug and alcohol abuse, hair-trigger violence, and a debilitating tendency to blame others for one’s problems (e.g., the government, coal or steel companies, Obama). This, of course, is in stark contrast to what Vance did with his own impoverished circumstances: joined the Marines, went to college and law school, and became a Silicon Valley venture capitalist.

Now, Trump is campaigning again, and Vance is back, too, with the pending release of a Hillbilly Elegy movie directed by Ron Howard. In the interim, a steady stream of other books has appeared, offering more systematic reflections on how some in the white working class became angry enough to give us Trump.

White Working Class: Overcoming Class Cluelessness in America, by Joan C. Williams (2017), was one of the first and, given its limitations, best of the books. Williams confesses to her membership in what she calls the Professional-Managerial Elite (PME). But she’s married to a man from working-class origins, a “class migrant,” she calls him, and that’s helped her see the “cluelessness” of her peers. Williams’ message is simple: “When you leave the two-thirds of Americans without college degrees out of your vision of the good life, they notice.”

A Sane Country Would Welcome Them All

"Living Undocumented" follows people whose greatest crime was to believe in the American dream.

IT WAS APRIL 2017, just a couple of months into the Trump era, and our family was at our parish’s Easter vigil—a three-hour-plus Saturday night service that begins with a bonfire and includes the baptism and confirmation of those who’ve spent the last year preparing to enter the church. Our parish has one of the largest Hispanic communities in the area, so our Easter vigils are always bilingual.

By the time we distributed communion, it was around 11 p.m., and as I watched the procession of my Catholic neighbors go by, I was struck by the sight of the brown-skinned men, husbands and fathers in their 20s and 30s, coming down the aisle with sleeping babies cradled tenderly in their arms. They were contradictions to the president’s words: “When Mexico sends its people, they’re not sending their best.”

The recent Netflix documentary series Living Undocumented follows eight families through all nine circles of U.S. immigration hell. The immigrants in the series are from Honduras, Mexico, Colombia, Laos, Mauritania, and Israel. But all of them, even the Laotian guy who picked up a drug felony in his troubled youth, are people any sane country would welcome. And our government is doing everything it can to send them away.

Do We Need Universal Basic Income?

Though it's touted as a solution to our economic woes, universal basic income is a distraction from what workers need most.

WHEN I TOLD my oldest son I was writing about universal basic income (UBI), he said, “All I know is that the Silicon Valley guys are pushing it, so it must be bad.” And he had a point. UBI has entered U.S. political debate most prominently as Silicon Valley’s favorite solution to a problem mostly of its own creation—massive permanent job loss due to artificial intelligence and robotics.

Under a universal basic income policy, all U.S. citizens would receive from the government a regular, permanent payment of, say, $1,000 per month, regardless of their other income or employment status. It wouldn’t get rid of the grotesque income inequality in the U.S. In fact, it wouldn’t even guarantee each person a decent standard of living. But it would get everyone up to the official poverty level.

Tech industry UBI proponents include Facebook CEO Mark Zuckerberg, Tesla founder Elon Musk, and Amazon kingpin Jeff Bezos. But the idea is most identified with former Silicon Valley entrepreneur Andrew Yang, who made it the defining issue of his long-shot campaign for the Democratic presidential nomination.

Still, UBI is an idea much older and bigger than any of its shadier supporters. While the term “universal basic income” is of fairly recent coinage, the idea that every human deserves some share of the earth’s bounty is an old one. In 1797, one of America’s founding philosophes, Thomas Paine, wrote that “the earth, in its natural uncultivated state was, and ever would have continued to be, the common property of the human race.” But, Paine continued, “the system of landed property ... has absorbed the property of all those whom it dispossessed, without providing, as ought to have been done, an indemnification for that loss.”

Paine proposed a single payment at the attainment of adulthood as compensation for the loss of our natural right to the earth. Paine was echoing the ideas of some of the earliest Christian teachers, including St. Ambrose (340-397 C.E.), who wrote: “God has ordered all things ... so that there should be food in common to all, and that the earth should be the common possession of all. Nature, therefore, has produced a common right for all, but greed has made it a right for a few.”

So universal basic income is not just the latest Silicon Valley fad. It’s rooted in an understanding of the origins of wealth and of our obligations to each other that is consistent with both our democratic and religious traditions.

But that still leaves plenty of room for debate about whether UBI is the right solution for America’s most pressing social and economic woes.

How Would UBI Work?

ECONOMIC DEBATE OVER the past 50 years has offered a variety of UBI-type proposals, from Richard Nixon’s negative income tax to the social wealth dividend proposed by some contemporary democratic socialists. The best-known and most-debated current UBI plan is the one proposed by the Yang campaign. This version of UBI rests on three pillars:

First, it is “universal.” Everyone gets it, without conditions—from Warren Buffett down to the apparently able-bodied guy with the “Please Help” sign at the exit ramp. That, of course, raises the first blizzard of objections. Why give money to rich people who don’t need it or purportedly irresponsible people who might waste it?

Paying for UBI would almost certainly involve new taxes on the wealthy, so Warren Buffett wouldn’t be keeping his $1,000 per month. As to the fear of aiding the “undeserving poor,” it’s true that historically most of the meager social benefits offered in the U.S. are means-tested (for those with the very lowest incomes) and conditional upon some form of good behavior (hours worked, clean drug tests, etc.). This has helped create a culture that stigmatizes public benefits as “welfare” and brands beneficiaries as, if not sinful, at least defective.

Social Media Reaps What It Sows

What we get wrong about social media bias.

EVERY U.S. PRESIDENT since Richard Nixon has complained about his news coverage. But the man who lives in the White House now is doing something about it.

In August, Politico reported that the Trump administration is drafting an executive order to counter “liberal” bias in story selection and search results on the platforms Facebook, Twitter, and Google (owner of YouTube). According to this report, both the Federal Communications Commission and the Federal Trade Commission may be tasked with enforcing the neutrality of the digital platforms and the algorithms that prioritize stories and topics.

“Social media bias” must work well as a Republican fundraising pitch, because the administration and its allies in Congress have been harping on it for the past year. In September 2018, Twitter chief Jack Dorsey was hauled before the (then-Republican-controlled) House Energy and Commerce Committee and roasted over arcane and unproven claims of his company’s anti-conservative bias. The next day, Jeff Sessions (then still U.S. attorney general) called a meeting of his state-level counterparts to discuss possible actions against the alleged bias.

Lost Causes and Slivers of Hope

Reviewing ‘The Saint of Lost Causes,’ the new Justin Townes Earle album.

APPROPRIATELY ENOUGH, The Saint of Lost Causes—the new Justin Townes Earle album—has an Orthodox icon of St. Jude on the cover. In Catholic lore, St. Jude is the patron saint of lost causes and desperate cases. But if a person can still turn to a saint for intercession, the cause isn’t entirely lost. The desperate act of prayer implies at least a sliver of hope for grace and mercy, and that’s mostly where the people in Earle’s new batch of songs are: down to their last desperate prayer but still hoping.

At the beginning of the album, in the title track, Earle lays it out, singing: “Now it’s a cruel world / But it ain’t hard to understand / You got your sheep, got your shepherds / Got your wolves amongst men.” Over the course of the next 11 songs, we see the world mostly from the point of view of the sheep. We hear from some fracked-out citizens in “Don’t Drink the Water” who are growing increasingly restless as some oil company hack keeps claiming that their poisoned land and water, and the occasional earthquake, are all an “act of God.” Later, in “Flint City Shake It,” a streetwise Michigander fills us in on how General Motors assassinated his still-resilient hometown. Then there’s the junkie desperado of “Appalachian Nightmare” who hopes God can forgive him at the moment of his death.

World Wide Death

We've allowed Big Tech to create a world that enriches a very few, very wealthy investors.

THIS YEAR MARKS the 50th anniversary of the invention of the internet. One day in October 1969, scientists successfully transmitted data from a campus computer at UCLA to a computer at Stanford. Twenty years later, the infrastructure for the World Wide Web went into operation, and the creation of our whole digital universe quickly followed.

Lately, there have been plenty of days that have convinced me that the invention of the internet is one of the worst things that has happened since our first human parents decided that a little bit of “knowledge of good and evil” couldn’t possibly hurt anything.